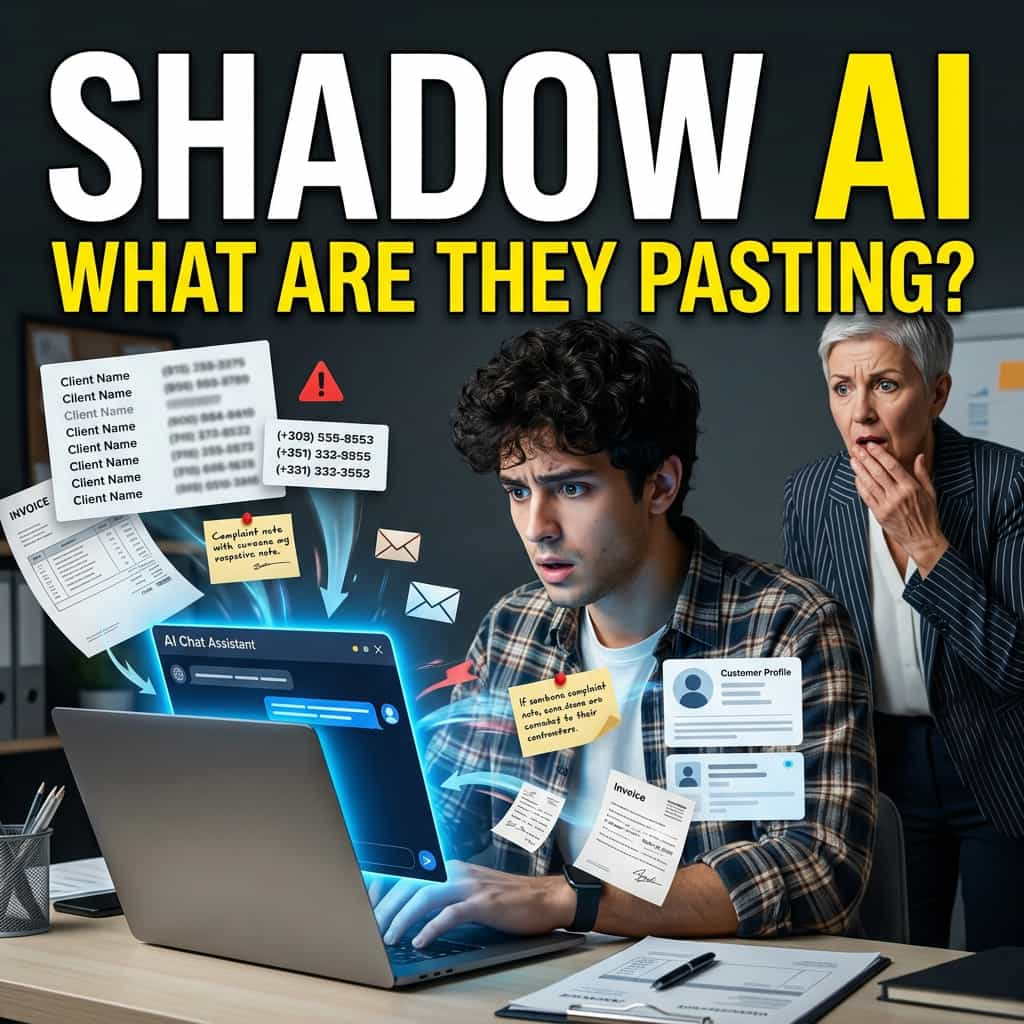

Janet has worked with you for four years. She is the one who keeps the place running on Tuesdays when you are out. Three months ago she discovered that ChatGPT could draft replies to client emails in half the time it used to take her. She has been using it every day since. You did not know. She also does not know that the customer names, phone numbers, and complaint details she pastes in are being processed by a US company that may use them to train the next version of the tool.

This is not Janet's fault. Nobody told her anything. The same scene is playing out in tens of thousands of small businesses across Australia right now.

It has a name. Shadow AI. And new research from IBM puts a number on what it costs.

What the IBM research actually found

In July 2025, IBM released its 20th annual Cost of a Data Breach Report, prepared with the Ponemon Institute, based on data from 600 organisations breached between March 2024 and February 2025. For the first time, the report measured shadow AI specifically.

One in five of the breached organisations, 20 per cent, had a breach that involved shadow AI: the use of unsanctioned AI tools by employees, without IT or management approval. ChatGPT, Claude, Gemini, image generators, summary tools, the whole list.

Organisations with high levels of shadow AI paid an average of US$670,000 more per breach than those with little or none. Customer personal information was exposed in 65 per cent of the shadow AI cases, against 53 per cent for breaches generally. Among the organisations that reported a breach of their AI systems, 97 per cent lacked proper AI access controls.

These are large company numbers. The pattern they describe is not.

Why this happens in small businesses

Three reasons, all of them ordinary.

The tool genuinely helps. ChatGPT really does cut a forty-minute task to ten minutes. The productivity gain is not imaginary. People who find it stop wanting to go back.

Nobody told them not to. Most small businesses do not have an "AI policy" because most small businesses do not have a CTO. The boss has not thought about it. The team figured it out alone.

The risk is invisible. When Janet pastes a customer's email into ChatGPT, nothing visibly goes wrong. The reply comes back. The customer is happy. There is no warning siren when the customer's name leaves your business.

What is actually at risk

Three things, in plain language.

The customer's information leaves your business. Once it is in a free-tier ChatGPT or Claude account, you no longer control where it goes, how long it is kept, or whether it is used to train the next version of the tool. The major AI companies have changed their policies on this several times. The honest answer is you cannot promise your customer where their information ended up.

Your competitor could end up with it. This is the worst-case scenario, and it is rare. But once your customer database has been pasted into a public AI tool, you have no way to prove it did not leak somewhere it should not have. If a customer ever asks "how do you protect my data?" you do not have a clean answer.

The customer who matters next year cares. The bigger client you want — the one who pays better, the one who runs procurement properly, the one who could double your revenue — will ask. They will want to know what AI tools your team uses. They will want to know what controls you have. If your answer is "I am not sure," you do not get the contract.

Why banning it does not work

Samsung tried this in 2023. After engineers pasted confidential source code into ChatGPT, the company restricted generative AI tools on its devices and internal networks. A ban like this is hard to hold. Staff can switch to personal phones, borrow a friend's account, or find browser-based tools the firewall does not see. Samsung described the restriction as temporary and went on to build an internal alternative.

The lesson scales down to small business cleanly. If the tool genuinely helps people do their jobs faster, banning it pushes the use underground. The exposure does not stop. It becomes harder to see.

What to do this week

Five steps. None of them requires technology you do not already have.

- Ask kindly, not as an interrogation. Tell the team you are curious what AI tools they have been using. Make clear nobody is in trouble. You are trying to understand what is working and what to be careful about. Most people will tell you, because they are proud they figured it out.

- Decide what is okay and what is not. Drafting a generic reply: probably fine. Pasting a customer's name, phone, email, payment information, or medical detail: not fine. Summarising a long email from a supplier: probably fine. Pasting a client's contract: not fine. The rule of thumb: nothing identifying anyone, nothing confidential, nothing financial.

- Pick one approved tool. Not five. One. Most small businesses can land on either ChatGPT or Claude. Both have paid plans (from around $20 per person per month). Their business plans do not train on your data by default. The free and personal plans can, unless you turn that off in the settings, so check the data controls before you rely on them. If your team is doing real work with these tools, the paid version is worth it.

- Write a one-paragraph rule. Not a five-page policy. Something you can pin in the kitchen. "We use [tool] for drafting replies and summarising. We do not paste customer names, phone numbers, payment details, or anything confidential. If you are not sure, ask before you paste." That is enough.

- Revisit in three months. This is moving fast. The rule that works today will need adjustment. Set a calendar reminder.
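If someone on the team is comfortable with a little scripting, the "ask before you paste" rule can be backed up with a quick check. The sketch below is only an illustration under stated assumptions: the function name `find_risky_details` and the three patterns are made up for this post, the phone pattern assumes Australian-style numbers, and nothing here can spot a customer's name or the details of a complaint. Treat it as a nudge to stop and think, not as a safeguard.

```python
import re

# Illustrative patterns only (assumptions, not a complete detector).
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    # Australian-style numbers: +61 or a leading 0, then nine more digits.
    "phone": re.compile(r"(?<!\d)(?:\+61|0)(?:[\s-]?\d){9}(?!\d)"),
    # Card-like runs of 13 to 19 digits, allowing spaces or hyphens.
    "card": re.compile(r"(?<!\d)(?:\d[\s-]?){12,18}\d(?!\d)"),
}


def find_risky_details(text: str) -> dict[str, list[str]]:
    """Return anything in `text` that looks like an email, phone or card number."""
    found = {}
    for label, pattern in PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            found[label] = matches
    return found


if __name__ == "__main__":
    draft = "Please call Sam on 0412 345 678 or email sam@example.com about the refund."
    print(find_risky_details(draft))
    # {'email': ['sam@example.com'], 'phone': ['0412 345 678']}
```

An empty result does not mean the text is safe to paste. It only means none of these three patterns matched, so the one-paragraph rule still does the real work.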

What this is really about

You are not trying to stop your team from using AI. You are trying to know what they are using, give them a safe way to use it, and make sure that when a customer or a bigger client asks "how do you handle data?" you have an answer that is not a shrug.

The IBM number, US$670,000 in extra breach cost linked to shadow AI, is for organisations large enough to have CISOs and breach response teams. For a small business, the equivalent damage is a customer telling other customers. It is the bigger contract you do not get because you could not answer the procurement question. It is the slow erosion of trust you cannot see until it is gone.

Janet is not the problem. The fact that nobody talks to Janet is the problem. Talk to Janet this week.

In the next post, we look at the agencies pitching custom AI builds in two weeks for $5,000 — what they are usually skipping, and the five questions to ask before signing.